Home Server

For a long time I've had a 2010 Mac mini sitting around the house. I bought it second hand, and upgraded it after a few years. Before my upgrades it was almost unusable because it took so long to boot up or do anything. Thanks to Apple's planned obsolescence, each OS update generally gave me some extra features but slowed the machine down (although El Capitan was a tiny improvement). I upgraded the RAM (3GB to 8GB) and put in a 250GB SSD, which made it usable enough for a uni project in 2015. I used it from time to time to dabble with iOS programming and to test features of Mac OS X, but it really wasn't used much at all.

In March 2018 I was inspired to work on my system admin skills, and the Mac mini seemed perfect for a simple home server. I had no trouble formatting the drive and installing other OSs, as I have no real attachment to OS X. Thankfully the firmware of the Mac mini makes it easy to choose boot drives, so it was simple to boot from USB drives I'd made. First I tried Ubuntu Server, which seemed like a pretty good choice, with lots of community support and documentation. I wasn't impressed though: the installation was strangely slow, and it became obvious that even with a headless install the CPU in the Mac mini was working hard (the computer was basically becoming a strong room heater). I figured that because Ubuntu Server was made for modern server PCs, it probably wasn't the most efficient when run on an 8-year-old Mac mini. It also had a huge problem connecting to my networks, which was the final nail in the coffin.

After looking into options for older hardware, I decided to try Lubuntu. Making bootable USBs with Rufus isn't hard, so I created a Lubuntu USB. This time, the installer was much faster than the Ubuntu Server one, and the computer didn't get ridiculously hot. Lubuntu is of course not a headless distro, but for the purposes of this home server, I'm not going to worry too much about this. Lubuntu is very simple and made to run well on older hardware, so the desktop environment doesn't use too much of the resources, and this is very easy to see on the Mac mini. Using the Firefox that came with the Lubuntu install, I could see it ran about 2-3 times as fast as it did with Mac OS X while doing some simple web browsing. For the initial setup of the server, I had the Mac mini attached to an old Dell VGA monitor. At this stage the desktop environment was very useful for overcoming problems more quickly.

My initial goals for the server were: a LAMP stack, a personal wiki, Nextcloud, and an SSH server running on startup. Basically, things that let me turn the server on and off on a whim (to save power), knowing it will automatically start all the required services at startup.

The first task was installing the rest of the LAMP stack (the Linux part was done). This was easy, as it's something I've done a number of times. After installing PHP, I also installed phpMyAdmin, software I really appreciate for its help in setting up and troubleshooting databases. Something that used to confuse me when I first started with LAMP servers was symlinks. Now I understand them well, and making the symlink in the /var/www/html/ folder was no problem. The only issue that came up was a 403 error when I tried to access phpMyAdmin through a browser. I assumed this was a permissions problem, because the owner of the phpMyAdmin folder symlinked into the Apache folder was root, and the www-data user that serves pages did not have access. Instead of searching online for a solution like normal, I tried something and found that changing the owner of the symlink to www-data solved the problem (I feel it's safe enough, as I added a username and password in .htaccess to add another layer of security to the folder).
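As a rough illustration of the two ideas in that paragraph (not the exact commands used on the server), the sketch below creates a symlink inside a web root and checks whether a folder is readable by users other than its owner, which is the kind of thing that produces a 403. The function names and the folder names in the usage note are hypothetical, and changing ownership is left out because it needs root.

```python
import stat
from pathlib import Path


def link_into_web_root(app_dir: Path, web_root: Path, name: str) -> Path:
    """Create web_root/name as a symlink that points at app_dir."""
    link = web_root / name
    if link.is_symlink():
        link.unlink()  # replace a stale link instead of failing
    link.symlink_to(app_dir, target_is_directory=True)
    return link


def readable_by_others(path: Path) -> bool:
    """True if users outside the owner and group can read and enter the folder.

    stat() follows symlinks, so this reports on the link's target.
    """
    mode = path.stat().st_mode
    return bool(mode & stat.S_IROTH) and bool(mode & stat.S_IXOTH)
```

On a real server this might be called as `link_into_web_root(Path("/path/to/app"), Path("/var/www/html"), "app")`, where `/path/to/app` is a placeholder. If `readable_by_others` returns False for the target, a web server running as a different user would likely be refused access.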

With phpMyAdmin working, next was installing the MediaWiki software. I often read Wikipedia articles, and I use wikis of other projects often, so I felt it would be nice to have a private wiki for my own thoughts and information. The installation was very easy, just installing some dependencies and then copying the code from the MediaWiki site. Again I made a symlink in the /var/www/html/ folder and set correct permissions. Running the web installer through the browser was very straightforward, and I made a 'hello world' page in the fresh wiki to celebrate. At this point I finished for the day after doing all this work through an SSH session, assuming the SSH server would start up automatically on the next boot.

My assumption was wrong though. When I turned on the Mac mini in a different room and tried to SSH into it, my connection was refused. I soon found that by default, Ubuntu-based systems don't connect to wifi, or start some services, until a user has logged in through the GUI. To fix this SSH issue, I started with simple fixes and worked my way through to more complicated solutions. As I wanted to make sure the SSH server was available right after the computer finished booting, the troubleshooting process involved restarting after each attempt to see if it worked. To make things simpler, I used a VGA monitor so I could log in to a user account and try some fixes that would have been much more complicated through a terminal.
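The reboot-and-check loop described above can be run from another machine on the network. This is a minimal sketch, assuming the server listens on the default SSH port 22; the function name and the address in the usage note are made up for illustration.

```python
import socket


def ssh_reachable(host: str, port: int = 22, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened.

    This only shows that something is listening on the port. A refused
    connection or a timeout both count as "not reachable".
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

After each reboot, something like `ssh_reachable("192.168.1.50")` (a placeholder address) would show whether the SSH server came up without anyone logging in at the desktop.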

First, I made sure the wifi network information was in the network interfaces configuration file, which is loaded on startup. This didn't fix the problem, but it was clearly a necessary part. I then tried adding the ssh service to startup with systemctl enable, along with an entry in rc.local. This technically worked, but the SSH server was having problems with IP addresses already being in use, which caused the server to fail on startup every time. The problem seemed like it could have been caused by DHCP not allocating an IP to the interface before the SSH service was started, resulting in an inappropriate configuration (but this wasn't the case). This problem would have been huge to fix, so I moved on to simpler and more commonly recommended options.

As with my single board computer running as a webserver, I thought an option that could work (in a less secure way) would be to have the user log in automatically on startup without needing a password or other input. Using the settings interface in Lubuntu, I changed the main account to not require a password on login, thinking it would behave the same as the single board computer. I was wrong. Like many Linux distros, Lubuntu uses LightDM for the login screen, which means that even when a password isn't required, the user still has to click a login button with the mouse. There was a greater problem now though: as the home folder for the main account was encrypted, I wasn't actually able to get into the desktop after clicking the login button, and I was stuck in a login screen loop. With no way to supply the password to get in and change the settings (I couldn't enter the username without the password field disappearing), I tried logging in as root. I could get to the desktop as root, but the users and groups software locked up when I tried to use it. My first idea was to change the configuration of LightDM manually, which didn't seem to be possible, and reconfiguring the package didn't reset it to defaults.

I then had to take more drastic measures, uninstalling and reinstalling LightDM from a TTY. Uninstalling was easy, but when I tried to reinstall, the system was stuck at '0% connecting...' in the apt-get install output. After searching online, it seemed like it could have been caused by IPv6 settings, and as I could ping the IP address with IPv4 packets, this seemed a reasonable assumption. I then set about blocking and disabling IPv6 in various places, like the network interfaces config and the GRUB config. This didn't solve the problem, and soon after I thought maybe it was caused by the connection to the Australian Ubuntu servers. Sure enough, after changing the sources list to the main link (without a country prefix), I was able to install LightDM again.
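The sources list change amounts to dropping the two-letter country prefix from the archive hostname. Here is a small sketch of that rewrite, assuming the entries use the usual `au.archive.ubuntu.com` style of country mirror name; the sample line in the usage note is illustrative and not copied from the actual file.

```python
import re

# Matches country mirrors such as "au.archive.ubuntu.com"
COUNTRY_MIRROR = re.compile(r"\b[a-z]{2}\.archive\.ubuntu\.com\b")


def use_main_mirror(sources_text: str) -> str:
    """Point every country-specific archive entry at the main archive."""
    return COUNTRY_MIRROR.sub("archive.ubuntu.com", sources_text)
```

For example, `use_main_mirror("deb http://au.archive.ubuntu.com/ubuntu/ RELEASE main")` gives back the same line with `archive.ubuntu.com` as the host, where `RELEASE` stands in for the release name. Entries that already use the main archive or other hosts are left alone.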

With LightDM freshly installed, I could log in, fix the user settings, and get back to fixing the SSH problems. After a lot of head scratching I found a post by someone on Stack Overflow talking about a similar problem, and they mentioned they had stopped the sshd service running at startup so 'only the ssh service was running.' From what I knew, ssh and sshd were different names for the same thing, but this suggested they were different beasts, and it could explain why the SSH server was having problems with taken IP addresses. After executing the simple systemctl disable command for sshd, I restarted the Mac mini and sure enough SSH was running without any user input necessary.

With SSH working it meant I could now put the server in a different room and work on it remotely, only needing a power cord plugged into it. Next to be installed was Nextcloud. Guides for installing Nextcloud were varied, and many mentioned installing through snap. Although I later found it to be a total waste of time, the snap installation process, and initial installation of Nextcloud was very easy. I didn't like the file structure of snap, or how it took control out of my hands, but it seemed to work. With help from a few guides I was able to add necessary trusted domains to a config file using (rather unusual) commands in terminal. What I really didn't like was how the new Nextcloud install completely took over the webserver daemon, making Apache useless, killing my wiki and phpmyadmin.

The real kicker was when the Nextcloud web installer got stuck trying to add the IP of the server as a trusted domain (something I had already done, it was shown in the config file). The first problem was that obviously the config file I changed was not read, and the second problem was that the web installer wanted me to go to a specific link from a browser on the server. I was using an SSH session, and I did not want to have to plug in a monitor, so I tried using wget, curl, and finally lynx to try and follow the link on the server. Nothing worked, and after some searching it seemed like snap installs do not allow anyone (even root!) to truly change files or gain control of everything. I completely purged both Nextcloud and snap, and started on installing Nextcloud manually from their website. This install was very straightforward and it was easier to take control. I could easily get Nextcloud running in a subfolder in the Apache folder.

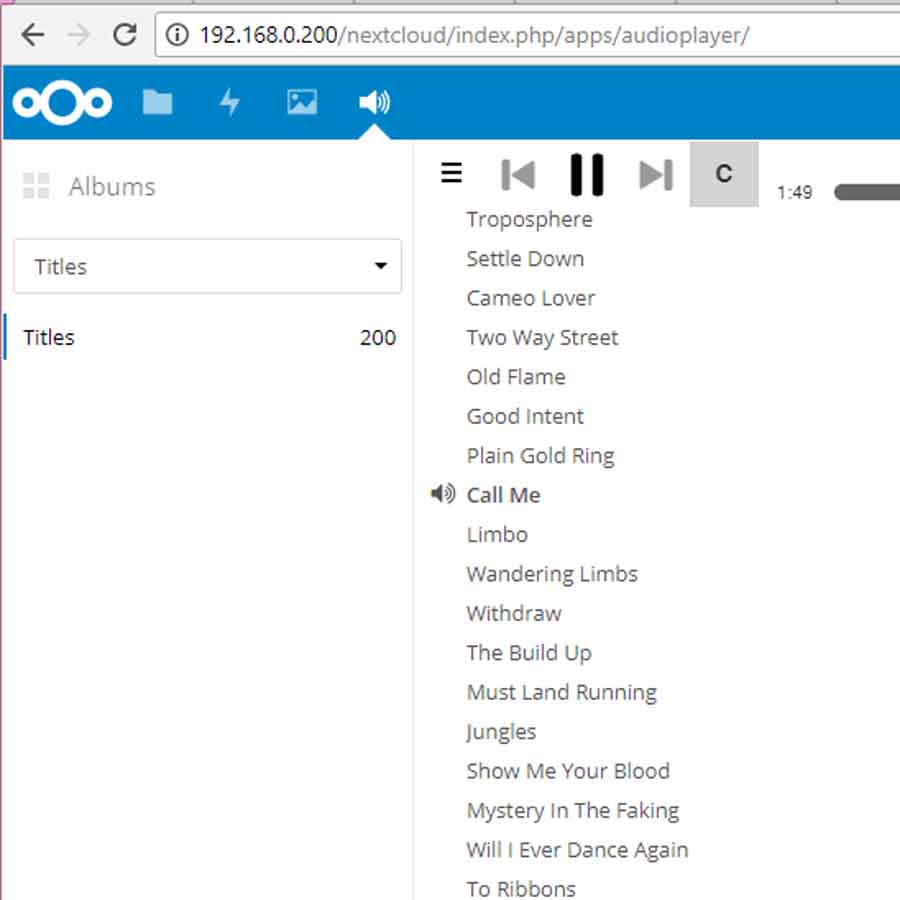

Soon after getting Nextcloud working well, I added an audio player app to it, to let me listen to music through a browser. It's a really nice feature and it's inspired me to look into adding more storage to the Mac mini (probably externally) so I can keep more music and photos on it. My future goals for the server are hardening security and maybe exposing it to the web.

Imageboard/Forum

In late 2017, in between trips around Japan, I started working on a forum with a friend of mine, based on the 4chan-style imageboards that are all over the internet. We wanted an online forum for us and our friends that we could control ourselves and that wasn't censored like Facebook. We started on my friend's main website's hosting server, then we deployed it to its own new server in early 2018. For the privacy of the users on the site, I won't share the address or anything specific that could identify it, but if you ask questions I will try to answer as much as I can.

We have aimed to keep the system lightweight and fast, without any external libraries that would bloat everything. Originally the forum was designed to match the look and feel and UX of the GameFAQs forums, but after experimenting with different layouts and experiences, we agreed on a more 4chan style of formatting for threads. The boards and thread lists are displayed in a traditional web 1.0 style, which we felt is the most straightforward and best for the users' goals. As they say, if it ain't broke, don't fix it. The threads are displayed in the 4chan style to keep the reader's mental strain down, and to make quoting and following the thread easy.

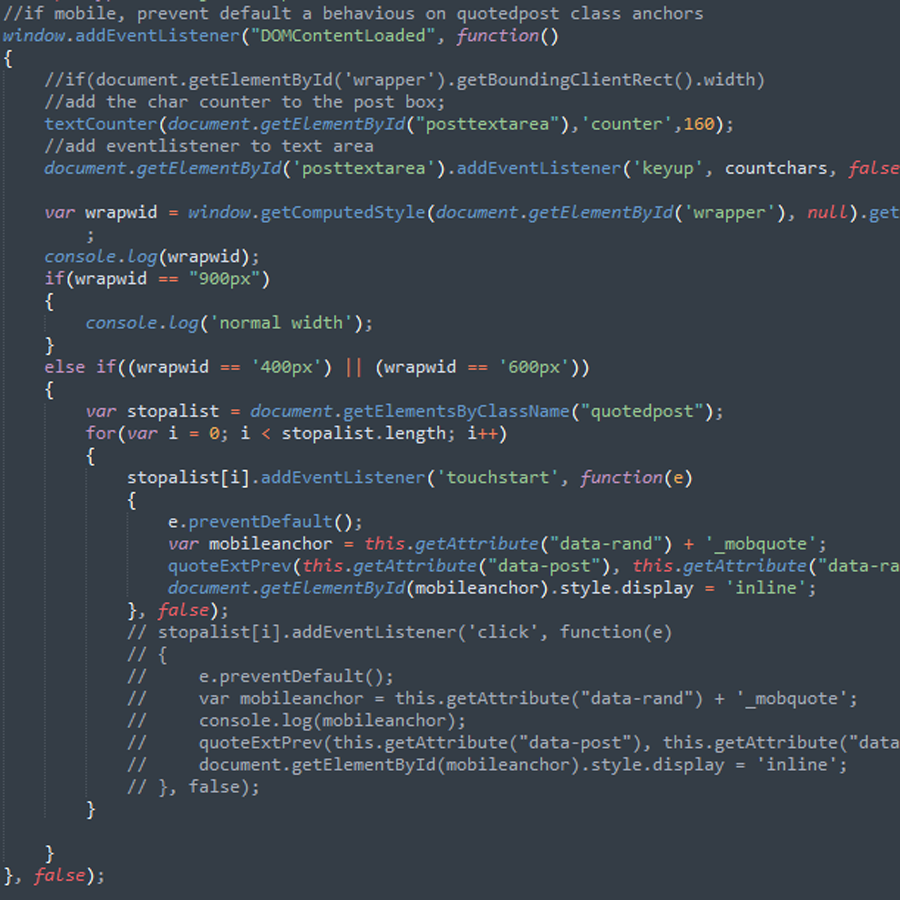

I developed a system for quoting posts that generates inline JS to show mouseover previews of the quoted post, so users can see it without needing to scroll up the page or be faced with the whole post every time it's quoted. My biggest problem with traditional web 1.0 forums was the wall of text that came with quotes, so this more 4chan-like approach worked much better. With a strong focus on mobile interactions, I worked hard to make sure there was an equivalent of the mouseover function for touch input, with the JS staying flexible for newer laptops that have touchscreens.
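To make the idea concrete, here is a simplified sketch of the server-side half: turning quote references into links that carry a short preview of the quoted post. It is not the site's code. It is written in Python rather than the site's PHP, it assumes quotes are written as `>>` followed by a post number, and it puts the preview in a plain `title` attribute instead of generating inline JS. All names are hypothetical.

```python
import html
import re
from typing import Mapping

# After escaping, ">>12" in a post body becomes "&gt;&gt;12"
QUOTE_REF = re.compile(r"&gt;&gt;(\d+)")


def render_post(body: str, posts: Mapping[int, str]) -> str:
    """Escape a post body and turn >>N references into preview links."""
    escaped = html.escape(body)

    def link(match: "re.Match[str]") -> str:
        post_id = int(match.group(1))
        if post_id not in posts:
            return match.group(0)  # unknown post: leave the text as typed
        # Keep the preview short and safe to place inside an attribute
        preview = html.escape(posts[post_id][:200], quote=True)
        return (
            f'<a class="quote" href="#p{post_id}" title="{preview}">'
            f"&gt;&gt;{post_id}</a>"
        )

    return QUOTE_REF.sub(link, escaped)
```

Escaping the whole body first means user text can never inject markup, and only the links added afterwards contain real HTML. A hover or tap handler on the `quote` class could then show the preview however the front end prefers.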

We use a standard HTML/CSS/JS/PHP/MySQL stack running on a Linux server for the system. I make changes using SSH and FTP, two things I'm very comfortable with. The server is running an IRC server with SSL encryption, using InspIRCd and Atheme services, which was a bit of a pain to get working. The wiki pages for the software are dated and a bit random with their guides, but after much experimentation with configuration files (in nano in terminal) and rebuilding the software, I was able to get it all working. Now I just have to remember the IRC commands that I've forgotten (it's been a while since I've used IRC).

My friend and I have been adding features and improving the site continuously, and we plan on keeping these forums going for a long time.

Self-hosted Services (now obsolete)

Recently I've been trying to move away from cloud services, for the sake of my privacy and control over my files. A friend at a party mentioned he was running a service similar to Dropbox on his own web server, which sounded like something I would find very useful. I decided the best test environment for self-hosted services like this would be the C.H.I.P. computer by Next Thing Co. (a mediocre little single-board PC) running on my local network. I already have the computer running a web server and an IRC bot, so it was a good test bed for trying web services (even though I've underclocked the CPU to 200MHz). I have headless Debian running on it, so I SSH into it using PuTTY or Terminal.

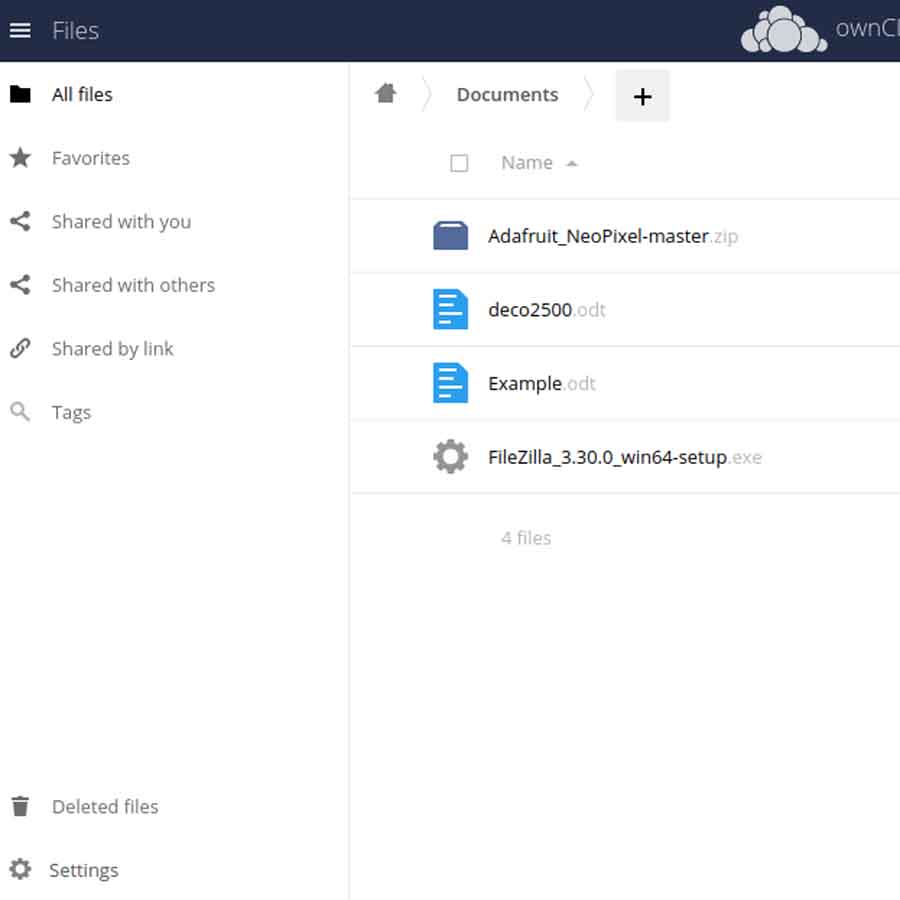

After searching online I found a quite popular Dropbox/Google Drive alternative, ownCloud. The setup was not as straightforward as it was supposed to be: a few dependencies I thought I had were not installed, and the web installer just didn't do a thing. I had thought PHP was installed, but I guess I didn't get around to that, so I installed it. I also somehow hadn't installed MySQL on the C.H.I.P. computer, so I got that installed. The manual install process was not too bad; the only issue was that different guides gave different instructions. After making a symlink for Apache to serve the ownCloud folder at [host address]/owncloud, it all worked fine.

Running on a 200MHz computer, the ownCloud system runs slowly, but it's acceptable because of the super low power consumption. It works really well as a simple place to store files when I need to swap OSs, and I'm happy to have it stay on my network permanently.

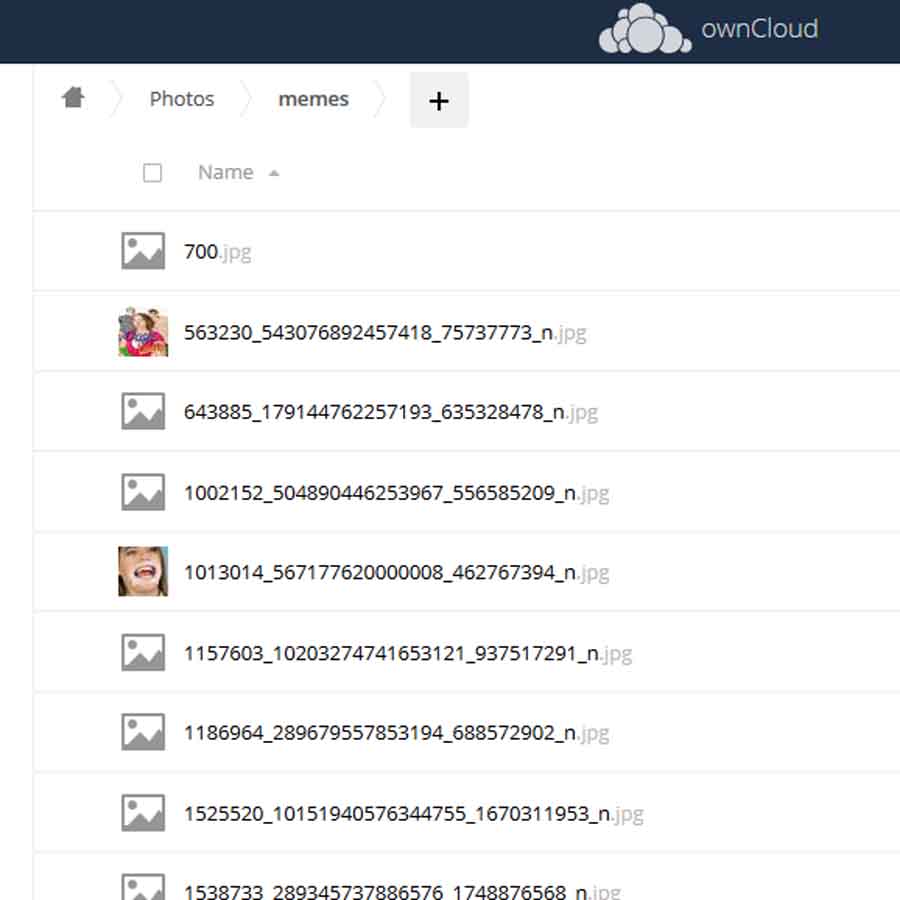

The interface design of ownCloud has a few problems, and I feel like they have tried to adopt the trendy minimalist design that most other modern sites have. An example of a fairly glaring issue, in my opinion, is the breadcrumbs that appear at the top of the page. As you can see in the picture, it has a fairly standard icon for home, with arrows showing the breadcrumb path to the current folder. The difference is that the arrows continue to the right of the current folder, pointing towards the + symbol to add a file or folder, which really messes with my mental model of the system. It makes me always doubt the location where I think a new file will be located. Will it end up in the same folder? Does the arrow indicate it will end up in a different folder? Even though I know it will upload to the same folder, the arrow towards the + button throws me off. It's a small design issue, but it has a big effect on such a fundamental feature.

My next goal is to experiment with Matrix and Syncthing, both interesting projects that I'll install to a 'real' web server if I'm impressed with how they work.

Bad Boy Bot

For the IRC server running on the site above, I'm working on an IRC bot. A long time ago I wrote a bot for a Discord server called 'Good Boy Bot', which would comment on things we said when triggered by certain words. I wanted it to be able to count the number of times we swore, but I never got round to it (half my group abandoned Discord).

For the IRC server, my friend had already decided he wanted to make Good Boy Bot 2.0, so I've decided to make Bad Boy Bot. Both bots are based on the Sopel framework, which is a Python-based system that lets us build our own functions on top of the existing ones. I want Bad Boy Bot to stay in IRC channels with passwords, because when everyone leaves a channel, ChanServ doesn't keep it open with the +k mode. Instead it opens the channel to anyone who joins, then it adds the +k. Not very secure. Good Boy Bot 2.0 is being developed on the website server, so I needed a different and persistent Linux build that could run the Sopel software.

A long time ago I backed the Next Thing Co. C.H.I.P. computer on Kickstarter, a $9 single board computer with VERY liberal marketing about its abilities. Basically, they greatly exaggerated its speed, abilities, and compatibility, so when I first got it, it was hard to make projects run on it. I gave up on it for a long time, but for the IRC bot I reflashed it headless and tried to make it work. Thankfully it finally worked and did what I asked it to, but it still got very hot while idling (running at 1GHz). I eventually found a script someone had written to slow down the CPU clock to lower the power consumption, and after trying it at an excruciating 24MHz, I found 200MHz worked well.

At the moment Bad Boy Bot is being developed on the C.H.I.P. computer, running with lower power consumption at 200MHz and powered from a USB port on my wifi router. I've got some basic functions going where Bad Boy Bot responds in a funny way to certain words, but with some more time to think of ideas, I hope to give it some cheeky functions to really make it the opposite of Good Boy Bot.
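The trigger-word replies, and the swear counter the original Good Boy Bot never got, could look something like the sketch below. It is plain Python with no Sopel wiring, so it runs without the framework. The trigger words, replies, swear list and class name are all placeholders, not the bot's real ones.

```python
import re
from collections import Counter
from typing import List

# Placeholder vocabulary; the real bot's words and replies are not shown here
TRIGGERS = {
    "walk": "Walk? No thanks, I'm staying in.",
    "fetch": "Fetch it yourself.",
}
SWEARS = {"damn", "bloody"}

WORD = re.compile(r"[a-z']+")


class BadBoyBrain:
    """Keeps a per-nick swear tally and picks replies for trigger words."""

    def __init__(self) -> None:
        self.swear_counts: Counter = Counter()

    def handle(self, nick: str, message: str) -> List[str]:
        """Update the tally for nick and return any replies to send."""
        words = WORD.findall(message.lower())
        self.swear_counts[nick] += sum(1 for w in words if w in SWEARS)
        return [TRIGGERS[w] for w in words if w in TRIGGERS]
```

A Sopel plugin would presumably call `handle` for each channel message and send back whatever it returns. Keeping the logic in its own class means it can be tested without connecting to an IRC server.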